
Let’s be real. AI is everywhere now. Your email autocompletes sentences. Your phone edits your photos. Your boss wants you to “leverage AI” in your workflow. But most people have absolutely no idea what’s actually happening under the hood.

And that’s fine. You don’t need a CS degree to get the basics.

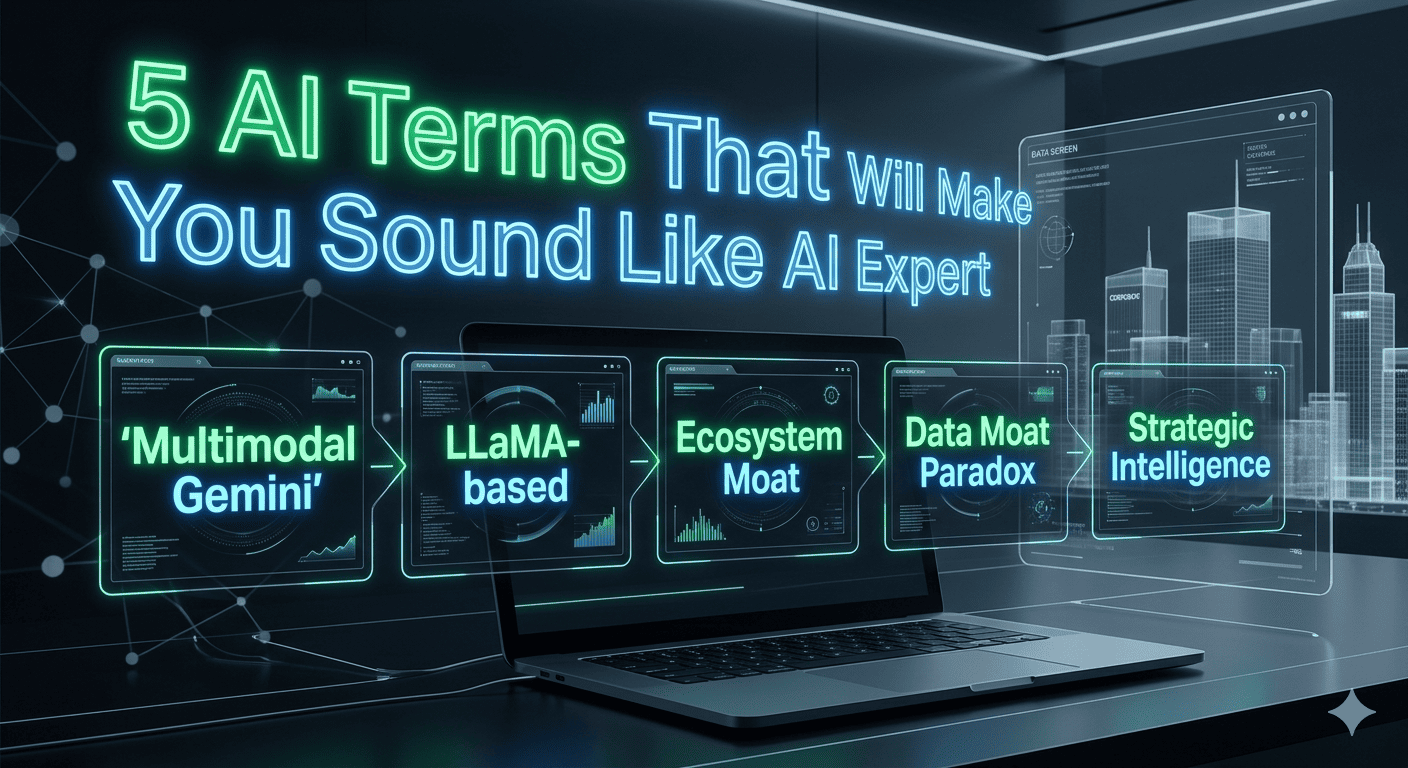

But here’s the thing: learning just five concepts will put you ahead of basically everyone you know. You’ll stop being a passive user who just accepts whatever the chatbot spits out and start being someone who actually understands what’s happening.

These are the five.

1. Large Language Model (LLM)

The simple version:

An LLM is an AI trained on a truly absurd amount of text — billions of documents, books, websites, Reddit threads, you name it. It doesn’t “think” or “understand” anything the way you do. It’s a pattern recognition machine that got really, really good at predicting what word should come next.

When you talk to ChatGPT, Claude, or Gemini, you’re talking to an LLM.

Why this matters:

Because once you understand they’re pattern matchers and not thinking beings, you stop trusting them so much.

An LLM can write a beautiful email that sounds exactly like you. It can also confidently tell you that a specific law exists when it absolutely doesn’t. Both responses feel equally convincing. That’s the danger.

They’re not lying. They’re just really good at sounding like they know what they’re talking about — even when they don’t.

Real example:

Ask it to write a business email? Perfect, it’s seen millions of those. Ask it about a specific medical study? It might invent one that sounds completely legitimate. The pattern recognition works the same way on every topic; accuracy doesn’t. It depends on how well, and how correctly, the training data covered that topic, and even then it isn’t guaranteed.

If you want to see how different LLMs stack up, our ChatGPT vs Claude vs DeepSeek comparison breaks down which one actually performs best in real-world use.

2. Hallucination

The simple version:

This is when an AI makes stuff up — confidently, convincingly, and completely incorrectly.

It’s not a bug. It’s how these systems work. They’re trained to generate text that sounds right, not text that is right. They have no ability to check facts against reality. They just… generate.
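To see “sounds right, not is right” in miniature, here is a toy bigram model (a simplification, not a real LLM) trained only on true sentences. Because it only tracks which word can follow which, it will happily produce a fluent sentence that is false. The corpus is invented for illustration:

```python
from collections import defaultdict


def train(corpus: list[str]) -> dict[str, set[str]]:
    """Record every word that has ever followed each word."""
    follows: dict[str, set[str]] = defaultdict(set)
    for sentence in corpus:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            follows[current].add(nxt)
    return follows


def all_continuations(follows: dict[str, set[str]], start: str, length: int) -> list[str]:
    """Every word sequence of `length` words the model could produce from `start`."""
    paths = [[start]]
    for _ in range(length - 1):
        # Extend each path with every word ever seen after its last word.
        paths = [p + [nxt] for p in paths for nxt in sorted(follows.get(p[-1], ()))]
    return [" ".join(p) for p in paths]


# Every training sentence is true.
corpus = [
    "paris is the capital of france",
    "rome is the capital of italy",
]
model = train(corpus)
for sentence in all_continuations(model, "paris", 6):
    print(sentence)
# paris is the capital of france
# paris is the capital of italy   <- fluent, confident, false
```

Nothing in the model checks facts; “of italy” is simply a pattern it has seen.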

Why this matters:

This is probably the single most important concept for anyone who uses AI. It explains a lot of embarrassing headlines:

- Why lawyers have gotten in trouble for submitting AI-generated briefs with fake court cases

- Why journalists have published AI-generated quotes that never happened

- Why students have turned in essays with perfect-sounding but completely made-up sources

The AI doesn’t know it’s wrong. It can’t know. It’s just doing what it was trained to do.

How to deal with it:

- Cross-reference important facts yourself

- Ask the AI where it got its information, then check that the source actually exists and says what the AI claims

- Use tools like Perplexity that link to real sources

- Apply your own judgment — if something sounds off, trust your gut

We wrote a whole piece on how to evaluate AI tools that covers exactly how to spot hallucinations and test whether an AI is actually reliable.

3. Prompt Engineering

The simple version:

Prompt engineering is just a fancy way of saying “asking the right questions.”

There’s a huge difference between typing “tell me about dogs” and “explain the differences between golden retrievers and Labradors for a family with young kids, focusing on temperament and exercise needs.” Same topic, totally different results.

Why this matters:

Most people talk to AI like they’re texting a friend. Casual, vague, expecting the AI to figure out what they mean. That works for simple stuff, but it’s wasting the tool’s potential.

The difference between a lazy prompt and a well-crafted one is the difference between getting a generic paragraph and getting something you can actually use.

How to get better at it:

- Be specific about what you want

- Give context — who is this for? what format? what tone?

- Tell it what NOT to do

- Iterate — ask it to refine its own response
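One way to make those habits routine is to fill in a template before you hit send. The `build_prompt` helper below is a hypothetical sketch, not a feature of any AI product; it just assembles the pieces (task, audience, format, tone, things to avoid) into one specific prompt you could paste into any chatbot:

```python
def build_prompt(task: str, audience: str, fmt: str, tone: str,
                 avoid: list[str]) -> str:
    """Assemble a specific, context-rich prompt from its parts."""
    lines = [
        task,                     # be specific about what you want
        f"Audience: {audience}",  # context: who is this for?
        f"Format: {fmt}",         # context: what format?
        f"Tone: {tone}",          # context: what tone?
    ]
    if avoid:
        lines.append("Do NOT include: " + "; ".join(avoid))  # what NOT to do
    return "\n".join(lines)


vague = "tell me about dogs"
specific = build_prompt(
    task=("Explain the differences between golden retrievers and Labradors, "
          "focusing on temperament and exercise needs."),
    audience="a family with young kids",
    fmt="a short comparison table, then a one-paragraph recommendation",
    tone="friendly and practical",
    avoid=["breeder recommendations", "medical advice"],
)
print(specific)

# Iterate: a follow-up message asking the AI to refine its own answer.
follow_up = "Good start. Cut it to half the length and flag anything you're unsure about."
```

Same topic as the vague version; the difference is that the AI no longer has to guess what you meant.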

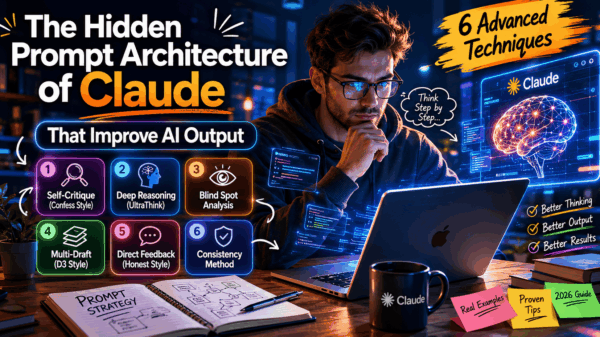

Our guide to writing better AI prompts has a full cheat sheet you can steal. And if you really want to dive deep, check out Claude’s hidden prompt architecture — it shows how the pros structure prompts for completely different results.

4. Token

The simple version:

A token is how AI models measure text. It’s not quite a word and not quite a character. Think of it as a chunk.

“Hello” is one token. “Hydro” + “ponics” might be two tokens. A period is one token. A typical email runs about 75-150 tokens. A page of text is about 300-400.
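To see why one word can be one token or several, here is a toy greedy longest-match tokenizer over a tiny, made-up vocabulary. Real tokenizers (GPT models use byte-pair encoding, for example) learn vocabularies of tens of thousands of chunks from data, so actual splits will differ. The `estimate_tokens` helper uses OpenAI’s published rule of thumb for English text: roughly 4 characters, or about three-quarters of a word, per token.

```python
# Hypothetical toy vocabulary; real vocabularies have roughly 50k-200k entries.
VOCAB = {"hello", "hydro", "ponics", "."}


def tokenize(text: str, vocab: set[str] = VOCAB) -> list[str]:
    """Greedily take the longest vocabulary chunk at each position.

    Anything not in the vocabulary falls back to one character per token,
    so unfamiliar words cost more tokens.
    """
    tokens = []
    for word in text.lower().replace(".", " .").split():
        i = 0
        while i < len(word):
            # Try the longest possible chunk first, then shrink it.
            for end in range(len(word), i, -1):
                if word[i:end] in vocab:
                    tokens.append(word[i:end])
                    i = end
                    break
            else:
                tokens.append(word[i])  # unknown: a single character
                i += 1
    return tokens


def estimate_tokens(text: str) -> int:
    """Rule-of-thumb estimate for English: about 4 characters per token."""
    return max(1, round(len(text) / 4))


print(tokenize("Hello"))         # ['hello']
print(tokenize("Hydroponics."))  # ['hydro', 'ponics', '.']
print(tokenize("cat"))           # ['c', 'a', 't']
```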

Why this matters:

Tokens explain three things that confuse most users:

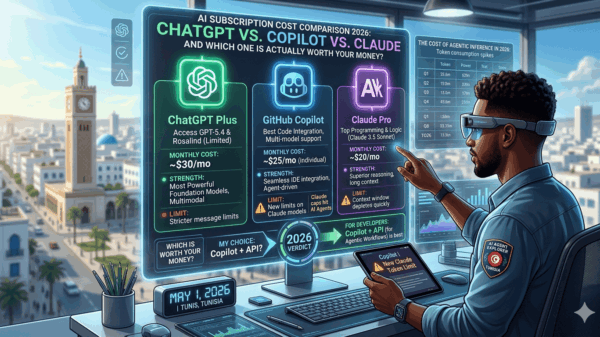

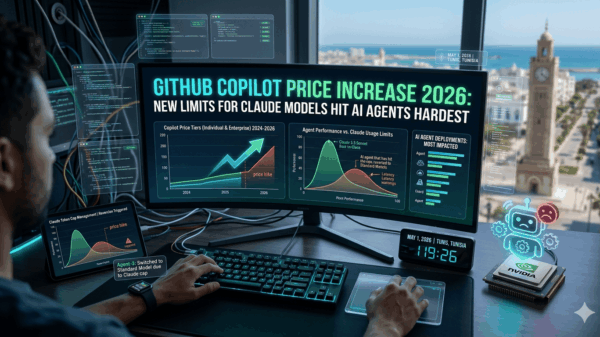

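Context windows: Every AI has a limit on how many tokens it can hold in one conversation. Claude’s standard context window is 200,000 tokens (basically a whole book); GPT-4o’s is 128,000. The exact numbers depend on the model and your plan, and they change often. When a conversation outgrows the window, the oldest parts get dropped or summarized, depending on the app, so the AI effectively forgets the beginning of your conversation. That’s why long chats get weird.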
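Apps handle an overflowing window differently (some drop old messages, some summarize them, some return an error), but the simplest strategy, keeping the newest messages that still fit, can be sketched like this. `count_tokens` is a crude hypothetical stand-in based on the ~4 characters per token rule of thumb, and the 45-token window is deliberately tiny:

```python
def count_tokens(text: str) -> int:
    """Crude estimate: roughly 4 characters per token in English."""
    return max(1, len(text) // 4)


def fit_to_window(messages: list[str], window: int) -> list[str]:
    """Keep the most recent messages whose estimated tokens fit in the window.

    Older messages are dropped first, which is why a model can "forget"
    how a long conversation started.
    """
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # newest first
        cost = count_tokens(message)
        if used + cost > window:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


history = [
    "My name is Priya and I run a bakery.",  # the detail that gets lost
    "x" * 120,                               # a long middle message (~30 tokens)
    "Can you suggest a name for my new sourdough?",
]
print(fit_to_window(history, window=45))  # the first message is gone
```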

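Pricing: If you use AI through a developer API, you’re charged per token, for both what you send and what comes back. Long conversations cost more because the whole history is typically re-sent with every new message; short prompts cost less. That’s why efficient prompts save you money. (Consumer subscriptions hide this behind a flat monthly fee.)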
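Here is the arithmetic behind “long conversations cost more”, as a sketch. The prices ($3 per million input tokens, $15 per million output tokens) are hypothetical examples, not any provider’s current rates, and the code assumes the full history is re-sent every turn, which is typical for chat APIs, although features like prompt caching can lower the bill:

```python
# Hypothetical example prices in US dollars per million tokens.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


def conversation_cost(turns: int, prompt_tokens: int, reply_tokens: int) -> float:
    """Total cost of a chat where every turn re-sends the whole history as input."""
    total = 0.0
    history = 0
    for _ in range(turns):
        input_tokens = history + prompt_tokens  # old messages + the new prompt
        total += input_tokens * INPUT_PRICE_PER_M / 1_000_000
        total += reply_tokens * OUTPUT_PRICE_PER_M / 1_000_000
        history = input_tokens + reply_tokens   # the reply joins the history too
    return total


short_chat = conversation_cost(turns=5, prompt_tokens=100, reply_tokens=300)
long_chat = conversation_cost(turns=50, prompt_tokens=100, reply_tokens=300)
print(f"5 turns:  ${short_chat:.4f}")  # $0.0360
print(f"50 turns: ${long_chat:.4f}")   # $1.7100: 10x the turns, ~47x the cost
```

The cost grows much faster than the number of turns because each new message pays again for everything before it.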

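Output limits: Separately from the context window, there’s a cap on how long a single response can be. When an AI stops mid-sentence, it has usually hit that output limit. It’s not being dramatic. It just ran out of space.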
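A toy picture of what an output cap does: generation simply stops when the budget runs out, mid-sentence or not. Real APIs tell you when this happens; OpenAI’s Chat Completions API returns a `finish_reason` of `"length"`, and Anthropic’s Messages API a `stop_reason` of `"max_tokens"`. The `generate` function below is a hypothetical stand-in that just replays a fixed list of tokens:

```python
def generate(planned_tokens: list[str], max_tokens: int) -> tuple[str, str]:
    """Emit tokens until the answer ends or the output budget is used up.

    Returns the text and why it stopped: "stop" (finished) or "length" (cut off).
    """
    output = planned_tokens[:max_tokens]
    reason = "stop" if len(planned_tokens) <= max_tokens else "length"
    return "".join(output), reason


answer = ["The", " three", " main", " causes", " are",
          " inflation", ",", " debt", " and", " trade", "."]
print(generate(answer, max_tokens=20))  # full sentence, reason "stop"
print(generate(answer, max_tokens=6))   # cut off mid-sentence, reason "length"
```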

Token economics:

| What you’re writing | Rough tokens |
|---|---|
| Short email | 75-150 |
| One page | 300-400 |
| A chapter | 2,500-4,000 |
| A novel | 100,000+ |

If you’re trying to decide which AI subscription is actually worth it, our AI cost comparison for 2026 breaks down what you get for your money in terms of context windows and token limits.

5. Machine Learning

The simple version:

Machine learning is what makes all of this possible. Instead of a programmer writing explicit rules (“if this, do that”), the system learns from examples.

- Old way: Programmer writes “if temperature > 100, display ‘boiling’”

- ML way: System looks at thousands of temperature readings and their labels, figures out the pattern itself, and applies it to new data it’s never seen
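The two approaches above can be sketched in a few lines. The “old way” is a hand-written rule; the “ML way” is a very simple supervised learner that picks whichever cut-off best separates the labeled examples. The readings and labels are made up, and real ML models learn far more than a single threshold:

```python
def rule_based(temp_c: float) -> str:
    """Old way: a programmer writes the rule explicitly."""
    return "boiling" if temp_c > 100 else "not boiling"


def learn_threshold(examples: list[tuple[float, str]]) -> float:
    """ML way (toy): try each candidate cut-off and keep the one that
    classifies the most labeled examples correctly."""
    candidates = sorted(t for t, _ in examples)
    best_cut, best_correct = candidates[0], -1
    for cut in candidates:
        correct = sum((t > cut) == (label == "boiling") for t, label in examples)
        if correct > best_correct:
            best_cut, best_correct = cut, correct
    return best_cut


# Hypothetical labeled training data: (temperature in °C, label)
training = [(20, "not boiling"), (65, "not boiling"), (98, "not boiling"),
            (102, "boiling"), (110, "boiling"), (150, "boiling")]
cut = learn_threshold(training)
print(cut)  # 98: the learned rule is "boiling if temp > 98"


def learned(temp_c: float) -> str:
    """Apply the rule the system found by itself."""
    return "boiling" if temp_c > cut else "not boiling"


print(learned(130))  # boiling: a reading it never saw during training
```

Nobody typed “98” into the program; it came from the examples, which is also why bad examples produce a bad rule.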

Why this matters:

Machine learning explains why AI is both incredibly flexible and frustratingly unreliable.

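Traditional software is predictable. You give it the same input, you get the same output, every time. AI isn’t like that. Chatbots add some deliberate randomness when choosing each word, so the same prompt can get different answers. And because a model’s behavior is learned from data rather than written down as rules, it can handle things it’s never seen before, but it can also fail in unexpected ways that don’t make obvious sense.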
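One reason chatbots don’t give the same answer every time: they typically choose each next word by sampling from a probability distribution, and a “temperature” setting controls how adventurous that sampling is. The word scores below are invented, and this is a simplified sketch of the idea rather than any product’s actual decoding code:

```python
import math
import random


def sample_next(scores: dict[str, float], temperature: float, rng: random.Random) -> str:
    """Pick the next word by sampling from softmax(scores / temperature)."""
    if temperature == 0:
        return max(scores, key=scores.get)  # greedy: always the top-scoring word
    scaled = {w: s / temperature for w, s in scores.items()}
    top = max(scaled.values())
    weights = [math.exp(s - top) for s in scaled.values()]  # subtract max for stability
    return rng.choices(list(scaled), weights=weights, k=1)[0]


# Made-up model scores for the word after "The weather today is"
scores = {"sunny": 2.0, "cloudy": 1.5, "rainy": 1.0, "purple": -3.0}
rng = random.Random(42)

greedy = {sample_next(scores, 0, rng) for _ in range(5)}
varied = {sample_next(scores, 1.0, rng) for _ in range(50)}
print(greedy)  # {'sunny'}: same input, same output
print(varied)  # several different words from the same input
```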

This also explains why the same basic technology powers image recognition, language models, recommendation systems, and self-driving cars. They’re all doing the same thing — finding patterns in data — just applied to different problems.

The three types:

- Supervised learning: Trained on labeled examples (“this is a cat, this is not a cat”)

- Unsupervised learning: Finds patterns in unlabeled data (“these customers buy similar things”)

- Reinforcement learning: Learns through trial and error (“this move won the game, do more of this”)
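Supervised learning needs labels; the other two types don’t. Here is a toy sketch of each of those two. The spending figures and the two “moves” with fixed payoffs are invented, and both algorithms are the simplest textbook versions: one-dimensional k-means with two groups for unsupervised learning, and an epsilon-greedy learner that tries moves and averages the rewards for reinforcement learning. Real systems are far more elaborate.

```python
import random


def kmeans_1d(values: list[float], iterations: int = 10) -> list[list[float]]:
    """Unsupervised: split numbers into 2 groups with no labels given."""
    centers = [min(values), max(values)]  # start at the extremes
    groups: list[list[float]] = [[], []]
    for _ in range(iterations):
        groups = [[], []]
        for v in values:
            nearest = 0 if abs(v - centers[0]) <= abs(v - centers[1]) else 1
            groups[nearest].append(v)
        # Move each center to its group's average (keep it if the group is empty).
        centers = [sum(g) / len(g) if g else c for g, c in zip(groups, centers)]
    return groups


def learn_by_trial(payoffs: list[float], steps: int = 200, epsilon: float = 0.1,
                   seed: int = 0) -> list[float]:
    """Reinforcement: try moves, observe rewards, favor what has worked."""
    rng = random.Random(seed)
    totals = [0.0] * len(payoffs)
    counts = [0] * len(payoffs)

    def estimate(m: int) -> float:
        # Untried moves get an optimistic score so each is tried at least once.
        return totals[m] / counts[m] if counts[m] else float("inf")

    for _ in range(steps):
        if rng.random() < epsilon:
            move = rng.randrange(len(payoffs))             # explore: random move
        else:
            move = max(range(len(payoffs)), key=estimate)  # exploit: best so far
        totals[move] += payoffs[move]  # toy: each move always pays the same
        counts[move] += 1
    return [estimate(m) for m in range(len(payoffs))]


spend = [12, 15, 14, 80, 95, 88]  # hypothetical monthly spend per customer, no labels
print(kmeans_1d(spend))  # [[12, 15, 14], [80, 95, 88]]: budget vs. big spenders
estimates = learn_by_trial(payoffs=[0.2, 1.0])
print(estimates.index(max(estimates)))  # 1: it learned the second move pays more
```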

If you want to see machine learning in action (and how to actually make money with it), our list of best AI tools to make money online shows which platforms are worth your time and which are hype.

Bonus: The Transformer (the thing that made all this possible)

The simple version:

In 2017, a paper called “Attention Is All You Need” introduced the transformer architecture. It’s the technical foundation behind basically every modern LLM: GPT, Claude, Gemini, you name it.

Before transformers, most language models read text one word at a time, left to right. Transformers look at all the words at once and figure out which ones matter most to each other. Because that work can run in parallel, training became vastly more efficient, which is a big part of why AI suddenly got so much better around 2020.
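The core operation behind “figure out which words matter most to each other” is scaled dot-product attention from that 2017 paper: each word’s query is compared with every word’s key, the scores become weights via a softmax, and each output is a weighted mix of the words’ values, i.e. softmax(QKᵀ / √d) V. Below is a minimal pure-Python sketch. The 2-number word vectors are made up, and it skips the learned query/key/value projections by using the same vectors for all three; real models learn vectors with hundreds or thousands of dimensions and run many attention heads in parallel.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    top = max(xs)
    exps = [math.exp(x - top) for x in xs]  # subtract max for numerical stability
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries: list[list[float]], keys: list[list[float]],
              values: list[list[float]]) -> tuple[list[list[float]], list[list[float]]]:
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V.

    Each word's row is computed independently of the others, so on real
    hardware all rows can run in parallel (no left-to-right dependency).
    """
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)  # how much this word "looks at" each word
        mixed = [sum(w * v[j] for w, v in zip(weights, values))
                 for j in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Made-up 2-D vectors for "the", "cat", "sat" (real models learn these).
words = ["the", "cat", "sat"]
vectors = [[0.1, 0.0], [1.0, 0.5], [0.9, 0.6]]
out, weights = attention(vectors, vectors, vectors)  # self-attention: Q = K = V here
for word, row in zip(words, weights):
    print(word, [round(w, 2) for w in row])
```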

Why this matters:

It explains why the AI revolution happened when it did. The technology existed before, but transformers made it practical at scale. It also explains why different AI systems have similar capabilities — they’re all built on the same foundation.

The cheat sheet

| Term | What it means in plain English | Why you should care |
|---|---|---|
| LLM | Pattern recognition for language | It’s not thinking, it’s predicting |
| Hallucination | Making stuff up confidently | Always verify important facts |
| Prompt Engineering | Asking better questions | Changes everything about results |
| Token | How AI measures text | Explains limits and pricing |
| Machine Learning | Learning from examples, not rules | Why AI is flexible AND unreliable |

What to do with this

If you’re a regular user:

- Be specific when you ask AI questions

- Don’t trust important facts without checking

- Break long tasks into smaller chunks

- Remember it’s pattern matching, not understanding

If you’re a professional:

- Use your expertise to evaluate AI outputs

- Set up human review for anything important

- Treat AI as a multiplier, not a replacement

If you’re a decision-maker:

- AI needs oversight — don’t skip this

- Training your team matters more than buying the flashiest tool

- Pilot programs reveal what benchmarks miss

For a deeper dive, we tested 10 free AI tools for 30 days, and honestly, only 3 were worth keeping. That’s the kind of real-world testing that matters more than specs on paper.

Bottom line

Most people interact with AI every single day and have no idea how it works. You now know the five concepts that separate informed users from everyone else.

This isn’t about being a technical expert. It’s about being able to spot when an AI is making stuff up, knowing how to ask better questions, understanding why your chat history stops working after a while, and being realistic about what AI can and can’t do.

Share this with someone who still thinks AI is “magic.” Help them understand it’s just really sophisticated pattern recognition — and that it works best when humans stay in the loop.

The AI revolution isn’t happening to us. We’re all participating in it. Understanding the basics means you’re an active player instead of just along for the ride.

Want more? We’ve got a full article on the 5 AI terms that will make you sound like an expert — yes, this exact topic, with even more detail.

External Resources for Further Learning

Official Documentation

- OpenAI Documentation

- Anthropic Claude Documentation

- Google AI Gemini Documentation

- DeepSeek Documentation



Independent tech publisher and AI enthusiast exploring the intersection of artificial intelligence, productivity, and online entrepreneurship.