I’m going to tell you something embarrassing.

For about six months, I genuinely believed em dashes were the smoking gun. Every time I saw one — whoosh — I’d nod knowingly and think “yep, that’s ChatGPT.” I’d spot them in LinkedIn posts, in blog articles, in my own writing. I became that annoying person who pointed them out at dinner.

Then I went back and read some of my own pre-2023 writing.

Turns out, I’ve been using em dashes like a maniac for years. So have most professional writers. Stephen King uses them. Joan Didion built a career on them. Magazine editors love them because they’re actually useful.

The dash was never the real signal. It was just a decoy everyone got obsessed with, while the actual AI writing detection signals were sitting in plain sight all along.

What Everyone Got Wrong

Here’s what happened: ChatGPT got popular, people noticed it used em dashes a lot, and the internet did what the internet does — turned a small observation into an absolute rule. Prompt engineers started adding “no em dashes” to their custom instructions. LinkedIn influencers posted threads about it. Someone probably wrote a book.

Meanwhile, the actual patterns that expose AI writing never got the same attention.

I spent three years tracking this stuff across hundreds of client accounts. Thousands of drafts. And the single biggest tell isn’t punctuation. It’s structural predictability. The text reads like it was built from a template rather than thought through by a human.

The em dash thing was always a distraction.

Most discussions around how to spot AI text focus on superficial markers like punctuation, while ignoring deeper structural patterns that actually define AI-generated writing.

The Thing That Actually Gives AI Away

Here’s the most important signal in AI-written content, and nobody talks about it enough.

Human writing is messy. We write a punchy three-word sentence, then follow it with a meandering forty-word monster that wanders through three clauses and forgets where it was going. We get excited and compress. We pause and expand. We change our minds mid-sentence — sometimes mid-word.

AI doesn’t do any of that.

AI writing has a rhythm. A predictable, steady, metronomic rhythm. Most sentences land between 15 and 25 words. Each paragraph gets roughly the same attention. Each section gets the same depth. It reads like someone set cruise control on the prose.

There’s actually a technical term for this: low burstiness. It means the variance in sentence length is minimal. Everything is the same. Everything is balanced. Everything is — and this is the word that matters — uniform.

And uniformity is the thing humans almost never produce naturally.
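If you want to put a number on that, here is a minimal sketch. It assumes burstiness can be approximated by the coefficient of variation of sentence length (standard deviation divided by mean), which is one simple proxy and not necessarily what any particular detector computes. The function names and the naive sentence splitter are my own illustration.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? followed by whitespace, then count words per sentence.

    A naive splitter: abbreviations like "e.g." will be split incorrectly.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (assumed proxy).

    0.0 means every sentence has the same word count; higher means more variation.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.stdev(lengths) / statistics.mean(lengths)


varied = "I ran. Then I walked all the way home in the rain because the bus never came. Fine."
uniform = "The cat sat on the mat. The dog lay on the rug. The bird sat on the branch."

print(sentence_lengths(varied))   # [2, 15, 1]
print(burstiness(uniform))        # 0.0
```

Treat the score as a way to compare two drafts of similar length, not as a verdict on who wrote them.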

The Five Signals I Actually Look For

After going through enough AI text to develop eye strain, here are the patterns that actually mean something.

1. Sentence uniformity. If I can read the first half of a paragraph and predict the exact length and shape of the second half, something’s off. Most AI sentences sit in a narrow band — say 15-25 words — with very few outliers. Real writing has outliers everywhere.

2. Vocabulary clusters. AI models have comfort words. Not because they’re the best words, but because they show up constantly in training data. You’ll see delve, showcase, underscore, pivotal, landscape, tapestry, leverage, utilize — and phrases like “in today’s rapidly evolving world.” One of those is fine. Five in the same paragraph? That’s a signal.

3. The hedging habit. AI hedges constantly. “It’s important to note that…” “While there are many factors to consider…” “This can potentially…” “Generally speaking…” Human writers commit to statements. AI puts a seatbelt on every claim.

4. Vague abstraction. AI picks conceptual words over concrete details. It writes about “enhanced user engagement” instead of “users laughed at my app.” It discusses “robust functionality” instead of “the button that saves three clicks.” The text sounds important without saying anything specific.

5. The democratic paragraph. If an article covers four factors, each one gets roughly the same word count. Same depth. Same attention. Human writers don’t do this. We find one angle interesting and go deep. We rush through stuff we’re less excited about. We have favorites. AI doesn’t.
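Signals 2, 3, and 5 are easy to count by hand, and a rough script does the same job. The sketch below uses only the comfort words and hedging phrases quoted in this article, so the lists are illustrative rather than exhaustive, and the function names are hypothetical.

```python
import re

# Illustrative lists, copied from the examples in this article.
COMFORT_WORDS = {
    "delve", "showcase", "underscore", "pivotal",
    "landscape", "tapestry", "leverage", "utilize",
}
HEDGES = [
    "it's important to note that",
    "while there are many factors to consider",
    "this can potentially",
    "generally speaking",
]


def comfort_word_count(paragraph: str) -> int:
    """Count exact matches only; inflected forms like "delves" are not counted."""
    words = re.findall(r"[a-z]+", paragraph.lower())
    return sum(1 for w in words if w in COMFORT_WORDS)


def hedge_count(paragraph: str) -> int:
    """Count occurrences of the listed hedging phrases, ignoring case."""
    text = paragraph.lower().replace("’", "'")  # normalise curly apostrophes
    return sum(text.count(h) for h in HEDGES)


def paragraph_word_counts(text: str) -> list[int]:
    """Word count per paragraph, where paragraphs are separated by blank lines."""
    return [len(p.split()) for p in text.split("\n\n") if p.strip()]


sample = "We delve into the landscape to leverage a pivotal tapestry."
print(comfort_word_count(sample))                         # 5
print(paragraph_word_counts("one two three\n\nfour five"))  # [3, 2]
```

If the paragraph word counts come back nearly identical for every section, that is the democratic paragraph showing up in numbers.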

If you want to go deeper on how to actually evaluate AI output, I wrote about how to evaluate AI tools — same thinking, different application.

What Detection Tools Are Actually Measuring

Modern detectors don’t look for “AI words.” They measure stylometric patterns — the statistical fingerprints of how text behaves.

Research has identified a whole set of features: sentence length variance, paragraph length variation, vocabulary behavior, grammar construction, punctuation patterns, and content markers like hedging density and buzzword frequency.

No single feature proves anything. But clusters of patterns — structural uniformity plus vocabulary clusters plus excessive hedging — are strong indicators.
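The "clusters, not single signals" idea can be sketched as a simple vote. Everything specific here is an assumption for illustration: the feature names, the cut-off values, and the rule that two signals must fire. Real detectors are statistical models, not hand-set thresholds.

```python
from dataclasses import dataclass


@dataclass
class Features:
    sentence_length_cv: float      # stdev / mean of sentence lengths
    comfort_words_per_100: float   # comfort words per 100 words
    hedges_per_100: float          # hedging phrases per 100 words


# Hypothetical cut-offs, chosen only for this example and not calibrated on data.
MAX_CV = 0.35
MIN_COMFORT = 2.0
MIN_HEDGES = 1.0


def fired_signals(f: Features) -> list[str]:
    """Return the names of the signals that cross their (assumed) thresholds."""
    fired = []
    if f.sentence_length_cv < MAX_CV:
        fired.append("uniform sentences")
    if f.comfort_words_per_100 >= MIN_COMFORT:
        fired.append("vocabulary cluster")
    if f.hedges_per_100 >= MIN_HEDGES:
        fired.append("excessive hedging")
    return fired


def looks_clustered(f: Features, min_signals: int = 2) -> bool:
    """True only when several signals fire together; one alone is not enough."""
    return len(fired_signals(f)) >= min_signals


print(looks_clustered(Features(0.2, 0.5, 0.0)))  # False: only one signal fires
print(looks_clustered(Features(0.2, 3.0, 1.5)))  # True: all three fire
```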

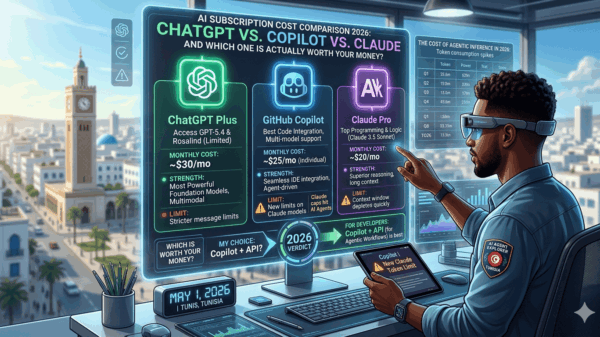

The best tools hit around 70% accuracy. That remaining 30% matters more than most people realize. Short text screws them up. Mixed authorship fools them. And increasingly sophisticated models slip through.
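To see why that 30% matters, here is a quick back-of-the-envelope calculation using Bayes' rule. It rests on two assumptions of mine: that "70% accuracy" means the tool catches 70% of AI text and wrongly flags 30% of human text, and that 20% of the texts being checked are AI-written. Change either number and the answer moves.

```python
def share_of_flags_that_are_ai(
    ai_share: float, detection_rate: float, false_positive_rate: float
) -> float:
    """Fraction of flagged texts that really are AI-written (Bayes' rule)."""
    true_flags = ai_share * detection_rate             # AI text, correctly flagged
    false_flags = (1 - ai_share) * false_positive_rate  # human text, wrongly flagged
    return true_flags / (true_flags + false_flags)


# Assumed inputs: 20% of texts are AI, 70% detection rate, 30% false-positive rate.
result = share_of_flags_that_are_ai(0.20, 0.70, 0.30)
print(round(result, 2))  # 0.37
```

Under those assumptions, roughly two out of three flagged texts were written by a human.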

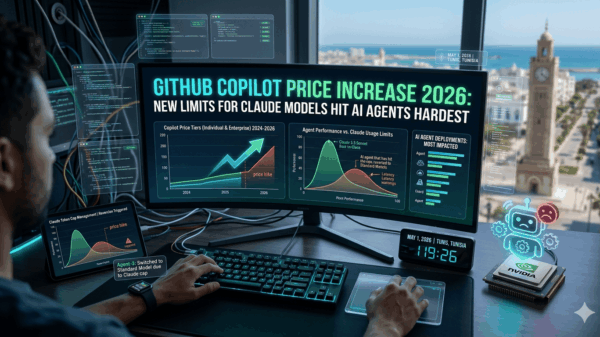

There’s a bigger conversation here about whether AI is becoming too expensive and what that means for how we use these tools — but for now, the detection piece is the one most people get wrong.


The Problem Nobody’s Talking About

Here’s the uncomfortable part.

AI detection tools flag non-native English speakers at alarming rates — some studies show up to 70% false positives. The US Constitution has been flagged as AI-written. If your writing is clear, structured, and correct, there’s a non-zero chance a detector will call it machine-made.

The irony is brutal: the patterns that weaken writing (monotony, vagueness, formulaic structure) are the ones that trigger detection. Yet clear, readable, correct prose is also more likely to be flagged.

So the real question isn’t “how do I avoid detection?” It’s “how do I make my writing better?”

How to Fix It (Whether You Used AI or Not)

If you’re editing AI output — or just want your own writing to sound less robotic — the fix is the same.

Vary your sentence length. On purpose. Add a four-word sentence. Write a forty-word one. Create rhythm changes.

Replace abstractions with specifics. Find every vague phrase and ask: what does this actually mean? Replace “enhanced user engagement” with what actually happens.

Cut the hedges. Read every “it’s important to note that” and delete it. If the statement stands without the cushion, keep it standing.

Bury some sections. Give one factor more attention and another less. That’s what humans do.

Add real details. Names, numbers, specific examples. AI avoids these because it can’t verify them. Your job is to bring them in.

Read it out loud. AI content often reads fine silently but sounds wrong spoken. Your ear catches things your eyes miss.
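For the first fix, a small helper can point at the stretches that need a rhythm change. This is a sketch under my own assumptions: a "monotone run" is three or more consecutive sentences whose word counts stay within three words of each other, and the scan is greedy, so it will not report overlapping runs. The function name and defaults are hypothetical.

```python
import re


def monotone_runs(text: str, band: int = 3, min_run: int = 3) -> list[tuple[int, list[int]]]:
    """Find runs of consecutive sentences with near-identical word counts.

    Returns (index of first sentence in the run, word counts in the run).
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    runs = []
    start = 0
    for i in range(1, len(lengths) + 1):
        # Would adding sentence i keep the run inside the band?
        window = lengths[start:i + 1] if i < len(lengths) else None
        if window is None or max(window) - min(window) > band:
            if i - start >= min_run:
                runs.append((start, lengths[start:i]))
            start = i  # greedy: the next run starts at the sentence that broke the band
    return runs


draft = (
    "The cat sat on the mat. The dog lay on the rug. The bird sat on the branch. "
    "Stop. Then everything changed in a way nobody at the table could possibly have predicted."
)
print(monotone_runs(draft))  # [(0, [6, 6, 6])]
```

Each run it reports is a candidate for a four-word sentence or a forty-word one.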

I wrote about how to write better AI prompts, which covers the other side of this: how to get better output in the first place so you have less to fix.

The Thing I Keep Coming Back To

Everyone obsessed over em dashes while the real tells sat in plain sight. Not because the dashes were the strongest signal, but because they were easy to spot. They gave people a simple rule in a complicated space.

Real AI detection isn’t simple. It’s statistical. It’s probabilistic. It’s about clusters of patterns, not single signals.

And honestly? The best way to avoid writing like AI is to just be a better writer. Vary your rhythm. Get specific. Have opinions. Sound like a person who actually cares about what they’re saying.

Works every time.

If this made you think differently about how you write, the hidden prompt architecture of Claude might surprise you — it’s the other side of the same coin.

If you understand these AI content signals, you don’t just improve detection accuracy — you also become a better writer overall.

FAQ (Frequently Asked Questions)

Can AI writing be detected accurately?

AI writing detection is not 100% accurate. Most modern tools rely on statistical patterns such as sentence structure, vocabulary distribution, and hedging frequency. However, studies show that even the best detectors have an accuracy rate of around 70%, meaning false positives and false negatives are still common. Human-like writing styles or edited AI content can easily bypass detection systems.

What is burstiness in writing?

Burstiness refers to the variation in sentence length and structure within a text. Human writing naturally has high burstiness — a mix of short, long, and irregular sentences. AI-generated text often has low burstiness, meaning sentences tend to follow a similar length and rhythm, making the writing feel more uniform and predictable.

Do em dashes really indicate AI?

No, em dashes are not a reliable indicator of AI-generated content. They are widely used in professional human writing, including by authors like Stephen King and Joan Didion. While AI models may use them frequently, the presence of em dashes alone does not prove anything. The real indicators of AI writing are structural patterns like uniform sentence length, repetition, and lack of specificity.
